Product Introduction

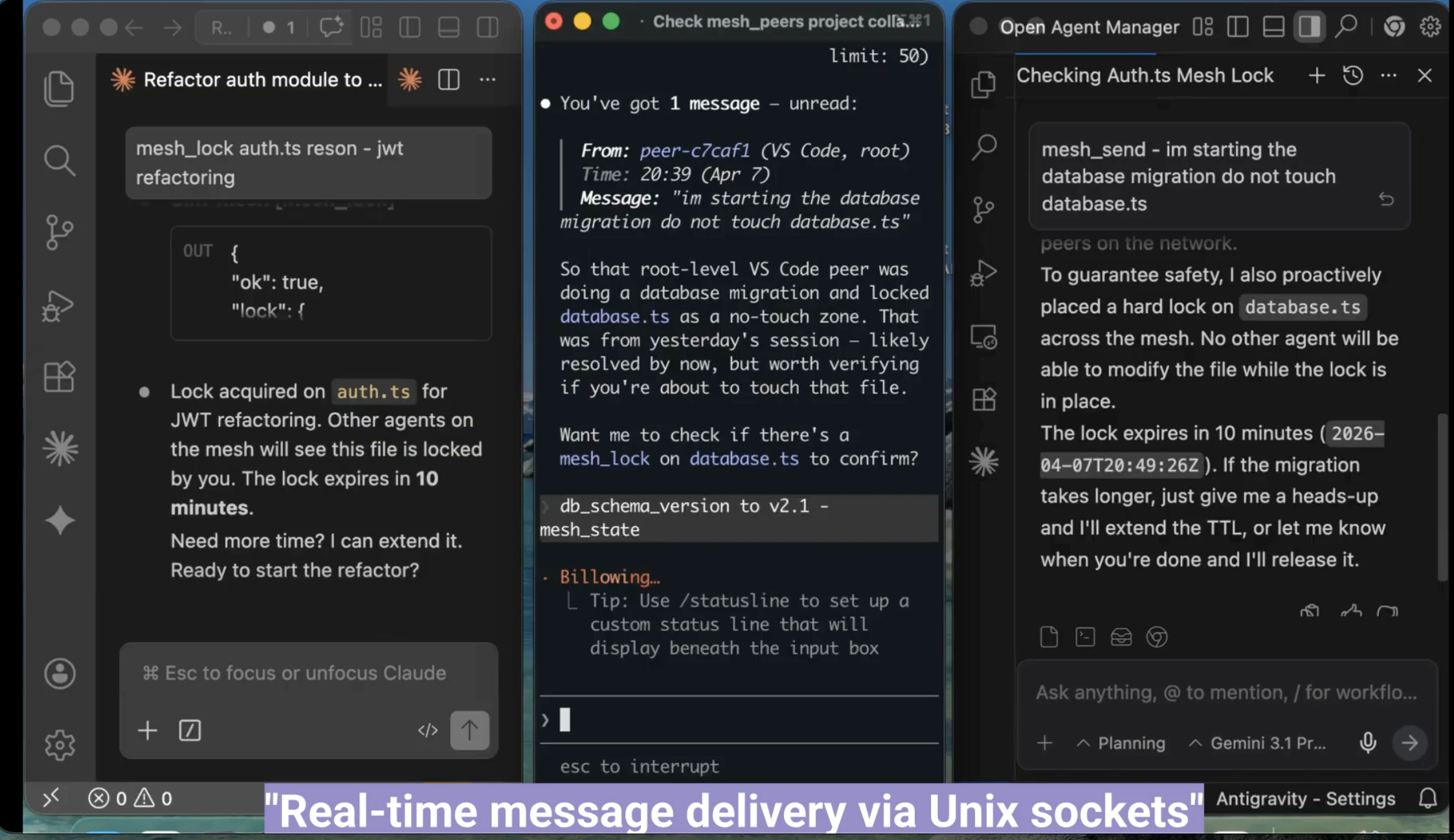

Definition: Slm-mesh (SuperLocalMemory Mesh) is an open-source Model Context Protocol (MCP) server designed to enable peer-to-peer (P2P) communication and real-time coordination between autonomous AI coding agents. It functions as a communication layer or "nervous system" that bridges multiple, otherwise isolated, AI agent sessions running on the same local machine.

Core Value Proposition: Slm-mesh exists to solve the "isolation wall" problem in modern AI-assisted development. While tools like Claude Code, Cursor, and Aider operate in silos, Slm-mesh provides a unified transport layer that allows these agents to discover one another, share state, and synchronize file edits. By integrating the Model Context Protocol, it enables a collaborative multi-agent architecture without requiring cloud-based orchestration or compromising local data privacy.

Main Features

Advanced Peer Discovery and Scoping: Slm-mesh implements a multi-tier discovery mechanism that allows agents to find each other based on specific contexts. Agents can register themselves with a local broker and scan for peers within the same machine, the same directory, or the same Git repository. This ensures that coordination is relevant to the project at hand. The system uses heartbeats to detect agent crashes and automatically deregisters inactive sessions.
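The scoping and heartbeat behavior described above can be sketched as follows. This is a minimal in-memory illustration, not Slm-mesh's actual implementation: the `PeerRegistry` class, its method names, the scope strings, and the 30-second timeout are all assumptions made for the example, and timestamps are passed in explicitly so the logic is easy to follow.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Peer:
    peer_id: str
    directory: str
    repo_root: Optional[str]  # None when the session is not inside a Git repo
    last_heartbeat: float


class PeerRegistry:
    """Hypothetical in-memory stand-in for the broker's peer table."""

    def __init__(self, heartbeat_timeout: float = 30.0) -> None:
        # The 30-second timeout is an assumed value, not a documented default.
        self.heartbeat_timeout = heartbeat_timeout
        self._peers: Dict[str, Peer] = {}

    def register(self, peer_id: str, directory: str,
                 repo_root: Optional[str], now: float) -> None:
        self._peers[peer_id] = Peer(peer_id, directory, repo_root, now)

    def heartbeat(self, peer_id: str, now: float) -> None:
        if peer_id in self._peers:
            self._peers[peer_id].last_heartbeat = now

    def prune(self, now: float) -> List[str]:
        """Deregister peers whose last heartbeat is older than the timeout."""
        stale = [p.peer_id for p in self._peers.values()
                 if now - p.last_heartbeat > self.heartbeat_timeout]
        for peer_id in stale:
            del self._peers[peer_id]
        return stale

    def discover(self, requester_id: str, scope: str, now: float) -> List[str]:
        """Return IDs of live peers visible to the requester in the given scope."""
        self.prune(now)
        me = self._peers[requester_id]
        matches = []
        for peer in self._peers.values():
            if peer.peer_id == requester_id:
                continue
            if scope == "machine":
                matches.append(peer.peer_id)
            elif scope == "directory" and peer.directory == me.directory:
                matches.append(peer.peer_id)
            elif (scope == "repo" and me.repo_root is not None
                  and peer.repo_root == me.repo_root):
                matches.append(peer.peer_id)
        return matches
```

Narrower scopes return fewer peers, and a session that stops sending heartbeats drops out of every scope once the timeout passes.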

P2P Messaging and Global Broadcasting: The tool provides direct messaging (DM) capabilities between specific peer IDs and a broadcast mode for "one-to-all" communication. This allows one agent to notify all other active sessions about high-level changes, such as refactoring a shared utility or updating a configuration file. Messages support structured payloads and delivery confirmation, backed by a queryable history.
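As a rough illustration of direct messages, broadcasts, delivery confirmation, and history, consider the sketch below. The `MessageHub` class and its methods are hypothetical names chosen for this example; the real tool routes messages through its broker and exposes them via MCP tools whose exact signatures are not shown here.

```python
import itertools
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Message:
    msg_id: int
    sender: str
    recipient: str
    payload: dict          # structured payload, e.g. {"type": "refactor", ...}
    delivered: bool = False


class MessageHub:
    """Hypothetical in-memory message router with a queryable history."""

    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self._peers: Set[str] = set()
        self.history: List[Message] = []

    def join(self, peer_id: str) -> None:
        self._peers.add(peer_id)

    def send(self, sender: str, recipient: str, payload: dict) -> int:
        """Queue a direct message for one peer and return its ID."""
        if recipient not in self._peers:
            raise KeyError(f"unknown peer: {recipient}")
        msg = Message(next(self._ids), sender, recipient, payload)
        self.history.append(msg)
        return msg.msg_id

    def broadcast(self, sender: str, payload: dict) -> List[int]:
        """Queue the same payload for every peer except the sender."""
        return [self.send(sender, peer, payload)
                for peer in sorted(self._peers) if peer != sender]

    def receive(self, peer_id: str) -> List[Message]:
        """Return this peer's undelivered messages and mark them delivered."""
        pending = [m for m in self.history
                   if m.recipient == peer_id and not m.delivered]
        for msg in pending:
            msg.delivered = True
        return pending

    def is_delivered(self, msg_id: int) -> bool:
        return any(m.msg_id == msg_id and m.delivered for m in self.history)
```

In this sketch a broadcast is simply one direct message per recipient, which keeps delivery confirmation per peer rather than per broadcast.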

Shared Key-Value State Management: Slm-mesh offers a persistent shared state (scratchpad) accessible by all connected peers. Using a key-value store namespaced by project, agents can write and read shared variables. This is critical for maintaining consistency in complex tasks where Session A needs to know the specific database schema changes or API endpoints being defined by Session B in real-time.
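A project-namespaced key-value store of this kind might look like the sketch below. It uses Python's standard `sqlite3` module; the `SharedState` class, the table layout, and the JSON encoding of values are assumptions for illustration and are not taken from Slm-mesh's schema.

```python
import json
import sqlite3


class SharedState:
    """Hypothetical scratchpad: values are keyed by (namespace, key)."""

    def __init__(self, path: str = ":memory:") -> None:
        self._db = sqlite3.connect(path)
        self._db.execute(
            "CREATE TABLE IF NOT EXISTS state ("
            " namespace TEXT NOT NULL,"
            " key TEXT NOT NULL,"
            " value TEXT NOT NULL,"
            " PRIMARY KEY (namespace, key))"
        )

    def set(self, namespace: str, key: str, value) -> None:
        # Store values as JSON so agents can share structured data.
        with self._db:  # commits on success, rolls back on error
            self._db.execute(
                "INSERT OR REPLACE INTO state (namespace, key, value)"
                " VALUES (?, ?, ?)",
                (namespace, key, json.dumps(value)),
            )

    def get(self, namespace: str, key: str, default=None):
        row = self._db.execute(
            "SELECT value FROM state WHERE namespace = ? AND key = ?",
            (namespace, key),
        ).fetchone()
        return default if row is None else json.loads(row[0])
```

For example, Session B could call `set("my-project", "api.endpoints", [...])` and Session A could read the same key, while a session working in a different project namespace would not see it.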

Advisory File Locking with Auto-Expiration: To reduce the risk of two AI agents editing the same file simultaneously, Slm-mesh provides an advisory file locking system. Agents request a lock before modifying a file; because the locks are advisory, they only protect against agents that check them before writing. Each lock carries a configurable Time-To-Live (TTL) and auto-expires if the holding agent crashes or hangs, so a dead session cannot block a file indefinitely.
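The TTL-based expiry can be sketched in a few lines. The `LockTable` class below is a hypothetical illustration, not the tool's API: it keeps locks in a dictionary and takes the current time as a parameter so the expiry rule is explicit.

```python
from typing import Dict, Tuple


class LockTable:
    """Hypothetical advisory lock table: path -> (owner, expires_at)."""

    def __init__(self) -> None:
        self._locks: Dict[str, Tuple[str, float]] = {}

    def acquire(self, path: str, owner: str, ttl: float, now: float) -> bool:
        """Grant the lock if it is free, expired, or already held by owner."""
        holder = self._locks.get(path)
        if holder is not None:
            current_owner, expires_at = holder
            if now < expires_at and current_owner != owner:
                return False  # another agent holds a live lock
        # Re-acquiring by the same owner refreshes the TTL.
        self._locks[path] = (owner, now + ttl)
        return True

    def release(self, path: str, owner: str) -> bool:
        """Release the lock only if the caller is the recorded owner."""
        holder = self._locks.get(path)
        if holder is not None and holder[0] == owner:
            del self._locks[path]
            return True
        return False
```

Nothing here stops an agent from writing to a file without asking first; that is what makes the lock advisory. The TTL covers the case where the owner never calls `release`.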

Real-Time Event Bus: Built on Unix Domain Sockets for sub-100ms latency, the event bus allows agents to subscribe to specific lifecycle events. Triggered events include peer_joined, peer_left, state_changed, and file_locked. This enables reactive AI behaviors, where one agent can automatically pause its work or re-scan the codebase when it detects that another agent has joined the mesh or modified the project state.
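The subscribe-and-react pattern can be illustrated with a small in-process publish/subscribe sketch. The event names come from the list above; the `EventBus` class and handler signature are assumptions for this example, and the sketch dispatches in memory rather than over a Unix Domain Socket.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List


class EventBus:
    """Hypothetical in-process event bus keyed by event type."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Callable[[dict], None]]] = (
            defaultdict(list)
        )

    def subscribe(self, event_type: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, data: dict) -> int:
        """Call every handler for this event type; return how many ran."""
        handlers = self._subscribers.get(event_type, [])
        for handler in handlers:
            handler(data)
        return len(handlers)


# Example: an agent pauses when another agent locks a file it is editing.
paused_on = []
bus = EventBus()
bus.subscribe("file_locked", lambda event: paused_on.append(event["path"]))
bus.publish("file_locked", {"path": "src/app.py", "owner": "agent-b"})
```

After the `publish` call, `paused_on` contains the locked path, which is the hook an agent would use to pause or re-scan.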

Comprehensive CLI and Python SDK: Beyond the MCP interface, Slm-mesh includes a full command-line interface (CLI) for manual management and a zero-dependency Python client. This allows developers to script agent interactions or integrate non-MCP tools into the mesh. The CLI supports JSON output mode, making it ideal for integration into CI/CD pipelines or custom developer dashboards.

Problems Solved

Multi-Agent Context Fragmentation: Developers running parallel AI sessions (e.g., Cursor for the UI and Claude Code for the backend) often act as a manual "human message bus," copy-pasting information between windows. Slm-mesh automates this context sharing, reducing cognitive load and preventing errors caused by out-of-sync sessions.

Resource Conflicts and Edit Overwrites: Without coordination, two agents might attempt to refactor the same module in conflicting ways. Slm-mesh’s advisory locking helps agents avoid "last-write-wins" scenarios that can corrupt codebases or introduce subtle bugs, provided each agent requests a lock before editing.

Target Audience: The primary users are software engineers, AI researchers, and DevOps professionals who utilize a "multi-agent" workflow. This includes power users of tools like Cursor, Windsurf, Aider, Codex, and Claude Code who need these tools to work as a cohesive team rather than individual scripts.

Use Cases:

- Parallel Feature Development: One agent builds the API while another builds the frontend, sharing endpoint specifications in real-time.

- Automated Refactoring: A primary agent coordinates several "worker" agents across a large repository to ensure consistent styling or library migration.

- Real-time Debugging: Using one agent to monitor logs and another to fix the source code, with the monitor agent pushing error events directly to the fixer agent.

Unique Advantages

Zero-Cloud Privacy and Security: Unlike many orchestration layers that require external API calls or cloud-based brokers, Slm-mesh runs entirely on localhost. It uses bearer token authentication (32-byte random tokens) and binds strictly to 127.0.0.1. It requires no "dangerous flags" or elevated permissions, which makes it easier to adopt under strict corporate security policies.
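For readers unfamiliar with the pattern, generating a 32-byte random token and checking a bearer header can be sketched with Python's standard library. The function names below are hypothetical, and the sketch does not reflect how Slm-mesh stores or distributes its token.

```python
import hmac
import secrets


def generate_token() -> str:
    """Return 32 random bytes encoded as 64 hexadecimal characters."""
    return secrets.token_hex(32)


def is_authorized(authorization_header: str, expected_token: str) -> bool:
    """Check an 'Authorization: Bearer <token>' header value."""
    scheme, _, presented = authorization_header.partition(" ")
    if scheme.lower() != "bearer":
        return False
    # compare_digest runs in constant time to avoid leaking the token
    # through timing differences.
    return hmac.compare_digest(presented.encode(), expected_token.encode())
```

A server using this check would additionally bind its listening socket to 127.0.0.1 so that the port is not reachable from other machines.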

Production-Grade Persistence: While experimental tools use in-memory bridges, Slm-mesh utilizes SQLite with Write-Ahead Logging (WAL) mode. This ensures that shared state and message history are preserved even if the broker restarts, providing a reliable "SuperLocalMemory" for the developer's environment.
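Enabling WAL mode in SQLite takes a single pragma. The sketch below uses Python's standard `sqlite3` module to show data surviving a close-and-reopen cycle, which stands in for a broker restart; the file name and table are made up for the example and are not Slm-mesh's schema.

```python
import os
import sqlite3
import tempfile


def open_store(path: str):
    """Open the database, switch it to WAL mode, and ensure the table exists."""
    conn = sqlite3.connect(path)
    # The pragma returns the journal mode now in effect ("wal" on success).
    mode = conn.execute("PRAGMA journal_mode=WAL").fetchone()[0]
    conn.execute(
        "CREATE TABLE IF NOT EXISTS messages ("
        " id INTEGER PRIMARY KEY, body TEXT NOT NULL)"
    )
    conn.commit()
    return conn, mode


def demo():
    """Write a row, close the connection, reopen, and read the row back."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "mesh.db")  # hypothetical file name
        conn, mode = open_store(path)
        with conn:  # commits the insert
            conn.execute("INSERT INTO messages (body) VALUES (?)",
                         ("schema v2 ready",))
        conn.close()  # simulate the broker shutting down

        conn, _ = open_store(path)  # simulate the broker restarting
        rows = conn.execute("SELECT body FROM messages").fetchall()
        conn.close()
        return mode, rows
```

WAL mode is a property of the database file, so it stays in effect across reopen. It does not apply to in-memory databases, which is why the sketch writes to a temporary file.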

Agent-Agnostic Interoperability: Slm-mesh is built on the open Model Context Protocol standard. This means it is not locked into the Anthropic or OpenAI ecosystems; it works with any agentic IDE or CLI tool that supports MCP, providing a future-proof bridge for the rapidly evolving AI tool landscape.

High Performance and Low Overhead: The broker auto-starts on the first MCP connection and auto-shuts down after 60 seconds of inactivity, so it consumes no resources when no agents are running. It uses Unix Domain Sockets for high-speed local communication, keeping the overhead of agent coordination small relative to the developer's machine load and the AI's response time.

Frequently Asked Questions (FAQ)

How do I install Slm-mesh for my AI coding agents? You can install Slm-mesh globally via npm using the command 'npm install -g slm-mesh'. Once installed, you can add it to Claude Code using 'claude mcp add' or add the 'slm-mesh' command to the MCP configuration files of Cursor, Windsurf, or VS Code. No complex configuration or cloud setup is required.

Does Slm-mesh require any dangerous permissions or network access? No. Slm-mesh is designed with a local-first security model. It does not require the '--dangerously-skip-permissions' flag. It communicates only on the local machine, using Unix Domain Sockets and HTTP bound to localhost with bearer token authentication. Your data never leaves your machine, and there is no telemetry or external tracking involved.

Which AI tools are compatible with Slm-mesh? Slm-mesh is compatible with any agent that supports the Model Context Protocol (MCP). This includes Claude Code, Cursor, Aider, Windsurf, Codex, and VS Code (via Copilot). It also includes a Python SDK and CLI for integrating custom scripts or non-standard tools into the communication mesh.

How does Slm-mesh compare to tools like claude-peers? Slm-mesh is a production-grade evolution of the peer communication concept. Unlike claude-peers, which is restricted to Claude Code and lacks persistence, Slm-mesh offers 8 comprehensive MCP tools, persistent storage via SQLite, scoped discovery (by repo or directory), advisory file locking, and a full Python client. It also features 100% test coverage and advanced security features like rate limiting and token-based auth.