Product Introduction

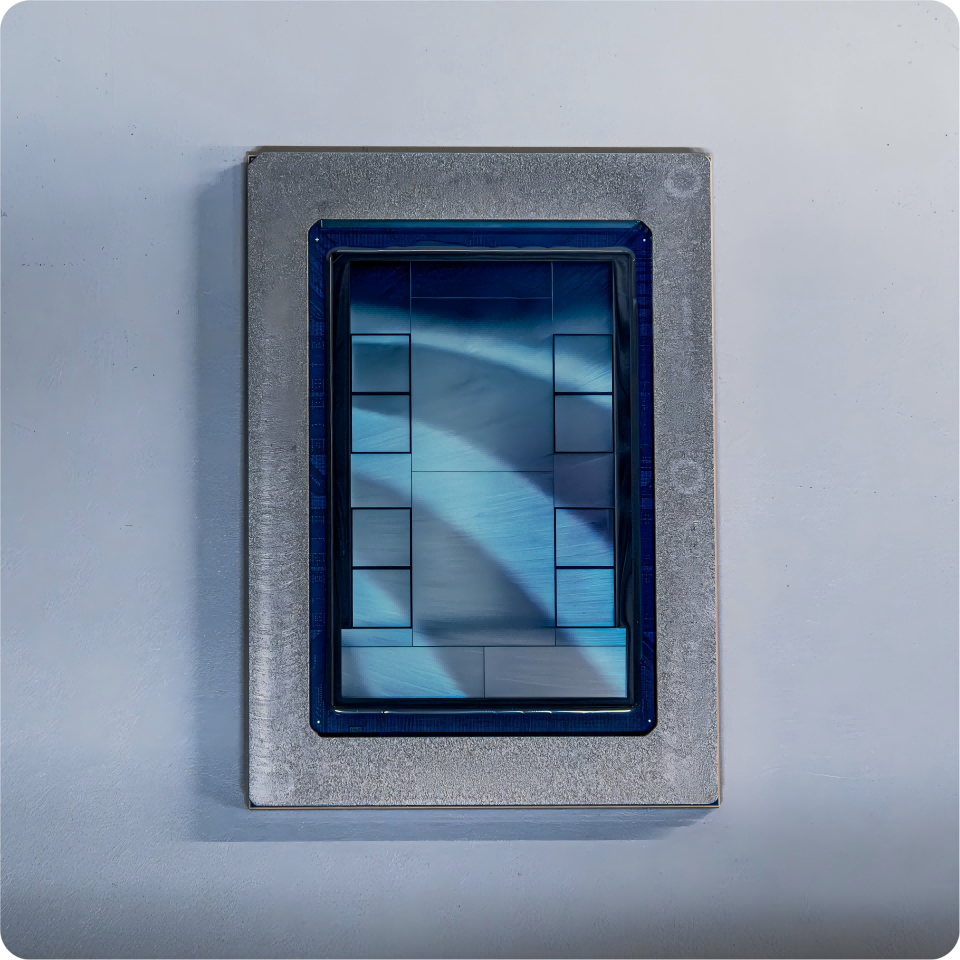

Definition: The MTIA 300 (Meta Training and Inference Accelerator) is a custom-designed AI silicon chip developed by Meta in partnership with Broadcom. It is categorized as a high-performance, domain-specific accelerator (DSA) optimized for handling the massive computational demands of Ranking and Recommendation (R&R) models and Generative AI (GenAI) workloads within hyper-scale data center environments.

Core Value Proposition: The MTIA 300 exists to provide a cost-effective, energy-efficient alternative to general-purpose GPUs for powering AI-driven experiences for billions of users. By utilizing an "inference-first" design philosophy and native PyTorch integration, it allows Meta to scale its AI infrastructure without the prohibitive costs and power constraints associated with traditional commercial silicon. It serves as the architectural foundation for Meta's high-velocity silicon roadmap, which includes the subsequent MTIA 400, 450, and 500 generations.

Main Features

Modular Chiplet Architecture: The MTIA 300 utilizes a multi-chiplet design consisting of one compute chiplet, two dedicated network chiplets, and multiple High-Bandwidth Memory (HBM) stacks. This modularity allows for improved manufacturing yields and the ability to upgrade specific components, such as I/O or networking, independently of the core compute logic.

Advanced Processing Element (PE) Grid: Each compute chiplet features a grid of specialized Processing Elements. Each PE integrates two RISC-V vector cores for general-purpose flexibility, a Dot Product Engine for high-throughput matrix multiplication, a Special Function Unit for activations and elementwise operations, and a dedicated Reduction Engine for inter-PE communication and accumulation.
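One way to picture the split between the Dot Product Engine and the Reduction Engine is a matrix multiply whose shared dimension is sliced across PEs: each PE computes a partial product and the partial results are then summed. The pure-Python sketch below is only an illustration of that idea. The function names, the slice size `k_tile`, and the mapping of slices to PEs are assumptions and do not describe MTIA's actual dataflow.

```python
from typing import List

Matrix = List[List[float]]


def pe_dot_product(a_tile: Matrix, b_tile: Matrix) -> Matrix:
    """Partial product of one K-slice, standing in for one PE's Dot Product Engine."""
    rows, inner, cols = len(a_tile), len(b_tile), len(b_tile[0])
    return [
        [sum(a_tile[i][k] * b_tile[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]


def reduce_across_pes(partials: List[Matrix]) -> Matrix:
    """Elementwise sum of the partial results, standing in for the Reduction Engine."""
    rows, cols = len(partials[0]), len(partials[0][0])
    return [
        [sum(p[i][j] for p in partials) for j in range(cols)]
        for i in range(rows)
    ]


def tiled_matmul(a: Matrix, b: Matrix, k_tile: int = 2) -> Matrix:
    """Slice the shared K dimension, one slice per hypothetical PE, then reduce."""
    partials = []
    for start in range(0, len(b), k_tile):
        a_tile = [row[start:start + k_tile] for row in a]  # columns of A in this slice
        b_tile = b[start:start + k_tile]                   # matching rows of B
        partials.append(pe_dot_product(a_tile, b_tile))
    return reduce_across_pes(partials)
```

Calling `tiled_matmul(a, b)` gives the same result as an ordinary matrix multiply; only the order in which the partial sums are formed changes.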

Integrated High-Bandwidth Networking: Unlike traditional accelerators that rely on external NICs, the MTIA 300 features built-in NIC chiplets and dedicated message engines. This design offloads communication collectives from the main compute cores and uses near-memory compute for reduction-based collectives, minimizing latency and maximizing throughput across scale-out network configurations, with scale-out bandwidth of up to 200 GB/s.
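For readers unfamiliar with reduction-based collectives, the sketch below simulates an all-reduce as a reduce-scatter followed by an all-gather, with plain Python lists standing in for per-device buffers. It shows what the collective computes, not how MTIA's message engines or communication library implement it. All function names here are hypothetical.

```python
from typing import List


def reduce_scatter(buffers: List[List[float]]) -> List[List[float]]:
    """Each of the n devices ends up owning the summed values of one chunk."""
    n = len(buffers)
    size = len(buffers[0])
    assert size % n == 0, "sketch assumes the buffer divides evenly across devices"
    chunk = size // n
    owned = []
    for rank in range(n):
        lo, hi = rank * chunk, (rank + 1) * chunk
        # The reduction step: sum the same positions across every device's buffer.
        owned.append([sum(buf[i] for buf in buffers) for i in range(lo, hi)])
    return owned


def all_gather(owned: List[List[float]]) -> List[List[float]]:
    """Every device receives a copy of all reduced chunks, in rank order."""
    full = [value for chunk in owned for value in chunk]
    return [list(full) for _ in owned]


def all_reduce(buffers: List[List[float]]) -> List[List[float]]:
    """All-reduce expressed as reduce-scatter followed by all-gather."""
    return all_gather(reduce_scatter(buffers))
```

With two devices holding `[1, 2, 3, 4]` and `[10, 20, 30, 40]`, both end up with `[11, 22, 33, 44]`. The summation inside `reduce_scatter` is the part that the text describes as handled near memory rather than on the main compute cores.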

PyTorch-Native Software Stack: The hardware is co-designed with a comprehensive software ecosystem that supports both eager and graph modes. It integrates directly with PyTorch 2.0’s compilation pipeline (TorchInductor), utilizing Triton-based kernel compilers and MLIR backends to translate high-level Python code into optimized device-specific machine code without requiring manual kernel rewrites.
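As a rough illustration of the compile-without-rewrite workflow, the sketch below wraps an ordinary PyTorch module with `torch.compile`. The `TinyRanker` model and the `compile_model` helper are hypothetical stand-ins, and the example is written to run on a stock PyTorch install; how an MTIA device is actually selected is not covered by the source and is deliberately left out.

```python
import torch
import torch.nn as nn


class TinyRanker(nn.Module):
    """Hypothetical stand-in for a ranking model: two linear layers and a sigmoid score."""

    def __init__(self, num_features: int = 16, hidden: int = 32) -> None:
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(num_features, hidden),
            nn.ReLU(),
            nn.Linear(hidden, 1),
        )

    def forward(self, features: torch.Tensor) -> torch.Tensor:
        return torch.sigmoid(self.net(features))


def compile_model(model: nn.Module, backend: str = "inductor") -> nn.Module:
    """Wrap the model with torch.compile; the model's own Python source is unchanged."""
    return torch.compile(model, backend=backend)
```

A call such as `compile_model(TinyRanker())(torch.randn(4, 16))` triggers compilation on first use and returns a `(4, 1)` tensor of scores. Passing `backend="eager"` skips code generation, which can be useful for checking that a model traces cleanly before targeting a specific device.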

Problems Solved

High Infrastructure TCO (Total Cost of Ownership): Commercial GPUs are often over-engineered for specific inference tasks, leading to wasted power and capital. MTIA 300 addresses this by optimizing hardware specifically for Meta’s dominant workloads, significantly reducing the cost per inference.

Latency in Scale-Out AI Models: Large-scale recommendation models often suffer from communication bottlenecks. The MTIA 300’s integrated communication library (HCCL) and dedicated message engines solve this by offloading collective operations, ensuring that data movement does not stall compute cycles.

Hardware-Software Decoupling: Traditional hardware often lags behind software innovations. MTIA 300's use of the Triton DSL and a Rust-based user-space driver allows developers to quickly adapt to new model architectures and low-precision data types (like MX8/MX4) as they emerge in the research community.
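The idea behind block-scaled low-precision formats such as the MX family is that a small block of values shares one power-of-two scale while each element is stored in only a few bits. The sketch below is a simplified illustration using signed integer element codes. It is an assumption-laden stand-in, not the exact MX8/MX4 encoding or any MTIA kernel, and the helper names are hypothetical.

```python
import math
from typing import List, Tuple


def quantize_block(values: List[float], bits: int = 8) -> Tuple[int, List[int]]:
    """Return (shared_exponent, integer_codes) for one block of values."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(v) for v in values)
    if largest == 0.0:
        return 0, [0] * len(values)
    # Smallest power-of-two scale that keeps the largest value within qmax.
    exponent = math.ceil(math.log2(largest / qmax))
    scale = 2.0 ** exponent
    # Round to the nearest code and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return exponent, codes


def dequantize_block(exponent: int, codes: List[int]) -> List[float]:
    """Recover approximate values by multiplying each code by the shared scale."""
    scale = 2.0 ** exponent
    return [code * scale for code in codes]
```

For any value in the block, the round-trip error is at most half the shared scale, so dropping from 8 to 4 bits per element trades a coarser scale for a smaller memory footprint.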

Target Audience: This product is designed for internal use by Meta’s AI Infrastructure Engineers, Machine Learning Researchers, and Data Center Architects who manage the hardware-software lifecycle for products like Llama, AI assistants, and personalized feed ranking.

Use Cases: The MTIA 300 is used for large-scale Ranking and Recommendation (R&R) training and GenAI inference optimization, and it serves as the deployment vehicle for production-grade models that require sub-millisecond response times at billion-user scale.

Unique Advantages

Inference-First Differentiation: While mainstream GPUs (like the NVIDIA H100) are designed primarily for large-scale pre-training, the MTIA series is built from the ground up for inference. This ensures higher utilization rates and better performance-per-watt for the "serving" phase of the AI lifecycle, where the majority of operational costs reside.

High-Velocity Iteration Strategy: Meta has established a capability to ship new MTIA iterations approximately every six months. This strategy allows for the rapid adoption of new process nodes and HBM technologies, ensuring the hardware remains aligned with the fast-evolving landscape of GenAI model architectures.

Frictionless Developer Adoption: By utilizing vLLM plugins and industry-standard OCP (Open Compute Project) rack designs, MTIA 300 can be deployed seamlessly into existing data centers. Developers can migrate models from GPUs to MTIA with zero code changes thanks to the deep integration with the PyTorch 2.0 compiler stack.

Frequently Asked Questions (FAQ)

What is the difference between MTIA 300 and MTIA 400? The MTIA 300 was initially optimized for Ranking and Recommendation (R&R) workloads, featuring a cost-effective foundation with a single compute chiplet. The MTIA 400 evolved to support GenAI more aggressively, offering 400% higher FP8 FLOPS, 51% higher HBM bandwidth, and a larger 72-accelerator scale-up domain.
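Percent-higher figures are easy to misread as multipliers. Taken literally, "400% higher" means five times the baseline and "51% higher" means 1.51 times the baseline. The helper below (a hypothetical name) makes that conversion explicit; it assumes the quoted percentages are meant literally.

```python
def multiplier_from_percent_increase(percent_higher: float) -> float:
    """Convert an 'X% higher' figure into a multiple of the baseline."""
    return 1.0 + percent_higher / 100.0
```

For example, `multiplier_from_percent_increase(400)` returns `5.0`.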

Does MTIA 300 support Large Language Models (LLMs) like Llama? Yes, while MTIA 300 was designed with a focus on R&R training, it has been successfully tested and deployed to run Llama-based models. Subsequent generations like the MTIA 450 and 500 further optimize this performance by doubling HBM bandwidth specifically for the "decode" phase of GenAI inference.

How does the MTIA software stack ensure model portability? MTIA uses a PyTorch-native approach where the MTIA graph compiler is built on Torch FX IR and TorchInductor. This means any model that can be compiled using `torch.compile` can run on MTIA without architectural rewrites, allowing models to run simultaneously on both GPUs and MTIA clusters.

Is MTIA 300 available for external commercial purchase? Currently, the MTIA 300 and its successors are part of Meta’s internal custom silicon strategy. They are designed to optimize Meta’s own infrastructure costs and power efficiency for its suite of apps and services, rather than as a retail product for the general market.