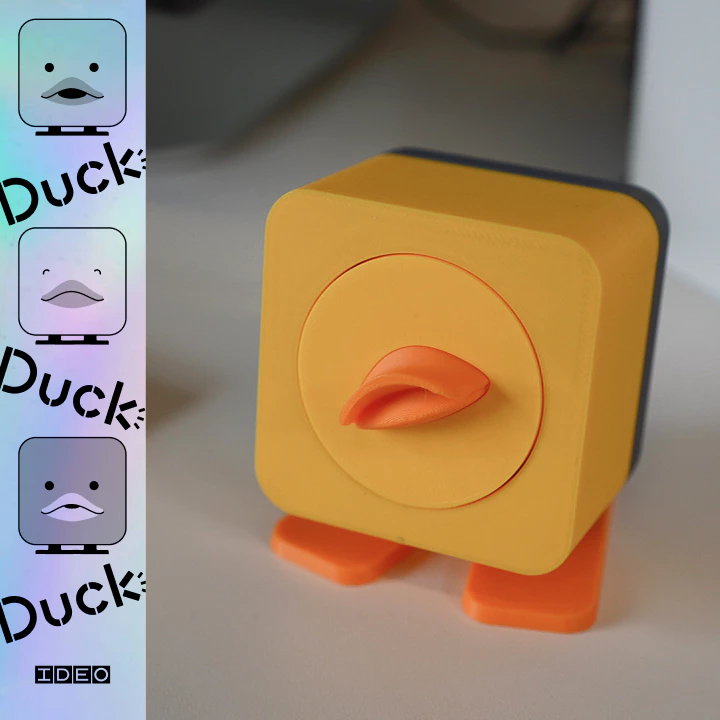

Product Introduction

- Definition: Duck, Duck, Duck! by IDEO is an AI-integrated hardware and software interface for "rubber duck debugging." It is a multimodal ambient computing tool that uses Anthropic's Claude large language model (LLM) to monitor the coding environment and give real-time verbal and physical feedback.

- Core Value Proposition: This product transforms the traditional, passive practice of rubber ducking into an active, collaborative AI experience. By integrating voice recognition and environment-aware AI, it aims to reduce developer cognitive load, identify logic errors through verbalization, and improve software quality via proactive, "opinionated" code reviews.

Main Features

- Claude-Powered Contextual Analysis: The system utilizes the Claude API to analyze real-time code execution, terminal outputs, and developer speech. Unlike static linters, this feature understands the intent behind the code, allowing the AI to question logic, identify potential edge cases, and suggest refactoring strategies based on the current state of the repository.

- Ambient Voice-First UI (VUI): The product features a voice interface that listens to the developer's spoken explanations. It uses speech-to-text (STT) and Natural Language Processing (NLP) to recognize when a developer is struggling, and it watches the terminal to notice when a process (like a test suite) fails. It also supports "voice approval," where users authorize terminal commands or code changes without taking their hands off the keyboard.

- Physical Actuation and Emotional Feedback (Robot Optional): For users with the physical hardware component, the duck uses mechanical actuators to react to code events. It can nod in agreement, shake its head at poor variable naming, or exhibit "hesitation" through movement when it detects high cyclomatic complexity or potential bugs in a function.
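The "hesitation" reaction to high cyclomatic complexity can be illustrated with a small sketch. The source does not describe how the product measures complexity or selects a movement, so everything here is an assumption: the function names `approx_complexity` and `pick_gesture`, the gesture labels, and the threshold of 10 are hypothetical. The count used is a common approximation of cyclomatic complexity (one plus the number of branching constructs), applied to Python source with the standard `ast` module.

```python
import ast

# Constructs that each add one decision point in this approximation.
_BRANCH_NODES = (
    ast.If, ast.For, ast.While, ast.ExceptHandler, ast.IfExp, ast.comprehension,
)


def approx_complexity(source: str) -> int:
    """Return 1 + the number of branching constructs found in the source."""
    score = 1
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, _BRANCH_NODES):
            score += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` has three operands and two extra decision points.
            score += len(node.values) - 1
    return score


def pick_gesture(source: str, hesitate_at: int = 10) -> str:
    """Hypothetical mapping from a complexity score to a gesture label."""
    return "hesitate" if approx_complexity(source) >= hesitate_at else "nod"
```

A real device would translate the returned label into actuator commands; that layer is hardware-specific and is left out of this sketch.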

Problems Solved

- Cognitive Blind Spots: Developers often overlook simple errors when they are too close to a project. Duck, Duck, Duck! solves this by acting as an objective, external auditor that forces the developer to explain their logic, which the AI then validates against the actual code.

- Target Audience: The primary users are Software Engineers, Full-stack Developers, and DevOps professionals who utilize rubber ducking techniques. It is also highly relevant for Junior Developers seeking an interactive learning aid and Hardware Enthusiasts interested in AI-driven robotics.

- Use Cases:

  - Live Debugging Sessions: Using the duck to "talk through" a complex recursion error.

  - Code Quality Audits: Receiving immediate, opinionated feedback on variable naming and architectural patterns.

  - Hands-Free Workflow Management: Approving deployment or build scripts via voice while multitasking within the IDE.
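The hands-free approval use case amounts to a gate between a transcribed reply and a pending command. The sketch below is one way such a gate might look, not the product's actual logic: the phrase lists and the names `classify_reply` and `gate_command` are hypothetical, and the transcript is assumed to arrive as plain text from some speech-to-text step. Rejection words take priority, and anything ambiguous is treated as "no approval."

```python
import re
import shlex

# Hypothetical phrase lists; a real system would need a far richer vocabulary.
APPROVE_PHRASES = ("yes", "approve", "approved", "go ahead", "ship it")
REJECT_PHRASES = ("no", "stop", "cancel", "wait")


def _contains(text, phrases):
    """Match whole words or phrases only, so 'know' does not count as 'no'."""
    padded = f" {text} "
    return any(f" {phrase} " in padded for phrase in phrases)


def classify_reply(transcript):
    """Return 'approve', 'reject', or 'unclear' for a spoken reply."""
    text = re.sub(r"[^a-z ]+", " ", transcript.lower())
    text = " ".join(text.split())
    if _contains(text, REJECT_PHRASES):  # rejection wins over approval
        return "reject"
    if _contains(text, APPROVE_PHRASES):
        return "approve"
    return "unclear"


def gate_command(command, transcript):
    """Return the command's argument list only when it was clearly approved."""
    if classify_reply(transcript) == "approve":
        return shlex.split(command)
    return None
```

The function deliberately returns the argument list instead of running it, so the caller decides how and whether to execute an approved command.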

Unique Advantages

- Differentiation: Most AI coding assistants (like GitHub Copilot) are reactive and text-based. Duck, Duck, Duck! is proactive and multimodal. It moves the AI interaction out of the sidebar and into the physical or auditory space, reducing "screen fatigue" and making the AI feel like a peer rather than just a search engine.

- Key Innovation (the "Opinionated" Feedback Loop): IDEO has intentionally designed the AI to be critical. By occasionally questioning decisions or criticizing variable names, the duck stimulates the developer’s critical thinking. This "friction-as-a-feature" approach is meant to keep the developer engaged with their own logic, rather than mindlessly accepting AI suggestions.

Frequently Asked Questions (FAQ)

How does the AI in Duck, Duck, Duck! understand my code?

The tool monitors your active IDE window and terminal output while listening to your verbal explanations. It passes this material to Claude, whose large context window lets it compare what you say with what you have actually written and review your technical logic as a whole.
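To make the answer above concrete, here is a minimal sketch of how the three inputs (code, terminal output, spoken explanation) might be combined into a single chat-style request. The source does not document the prompt or request format the product uses, so the function name `build_review_messages`, the prompt wording, and the 40-line terminal limit are all assumptions. The sketch only builds the message list and does not call any API.

```python
def build_review_messages(code, terminal_output, transcript, max_terminal_lines=40):
    """Bundle code, recent terminal output, and speech into one user message."""
    # Keep only the tail of the terminal output, where failures usually appear.
    tail = "\n".join(terminal_output.splitlines()[-max_terminal_lines:])
    prompt = (
        "Developer's spoken explanation:\n" + transcript.strip() + "\n\n"
        "Current code:\n" + code.strip() + "\n\n"
        "Recent terminal output:\n" + tail + "\n\n"
        "Point out where the explanation and the code disagree."
    )
    # Hypothetical layout: a single user turn in a role/content message list.
    return [{"role": "user", "content": prompt}]
```

Putting the explanation and the code in the same message is what lets the model check one against the other.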

Is a physical robot required to use Duck, Duck, Duck!?

No, the robot is optional. The software-only version provides the same high-level AI analysis, voice interaction, and "opinionated" feedback through your computer's audio interface. The physical robot serves as a tactile extension for users who want physical feedback (like nodding or movement).

Does Duck, Duck, Duck! integrate with specific IDEs?

The tool is designed to be environment-aware, typically integrating via CLI or background processes that monitor terminal activity and code changes. This allows it to function across various editors such as VS Code, IntelliJ, or even Vim, as long as the AI has access to the local development context.
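An editor-independent background process can detect code changes by watching the file system instead of hooking into any particular IDE. The sketch below shows one simple way to do that with the Python standard library, by comparing snapshots of file modification times. It is an illustration under assumptions, not the product's mechanism: the names `snapshot` and `diff_snapshots` and the default `.py` filter are hypothetical.

```python
import os
from pathlib import Path


def snapshot(root, suffixes=(".py",)):
    """Map each matching file under root to its modification time (ns)."""
    return {
        str(path): path.stat().st_mtime_ns
        for path in Path(root).rglob("*")
        if path.is_file() and path.suffix in suffixes
    }


def diff_snapshots(before, after):
    """Report which files were added, removed, or modified between snapshots."""
    return {
        "added": sorted(set(after) - set(before)),
        "removed": sorted(set(before) - set(after)),
        "modified": sorted(
            path for path in after
            if path in before and after[path] != before[path]
        ),
    }
```

A watcher would take a snapshot on a timer, diff it against the previous one, and hand any changed files to the review step. Because it reads only the file system, the same loop works whether the files were edited in VS Code, IntelliJ, or Vim.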